Upgrading To 18c / 19c / 20c: HOW DO I GET THERE?

In my prior blog post, I explained in considerable detail how to plan for your Oracle database upgrade and which high-level options are available. Hopefully, that gave you enough information on what your high-level path will include, especially the possible need for new hardware, the demands of a concurrent OS upgrade, the impact of multitenant features on your upgrade plan, and most importantly, how much downtime your applications can withstand.

If you have pulled together answers to these questions, maybe you have drafted an option or two for your upgrade path. You and your team are now ready to start with some sandboxing or technical tests, so let’s walk through each of the upgrade methods in more detail.

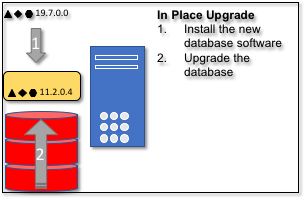

In Place Upgrade in Detail

For in-place upgrades, you’ve got three basic tools to choose from, and your choice will really depend on the expertise of your technical database staff as well as what exact options your database currently uses:

- AutoUpgrade Tool. This is a new Java-based command-line tool from Oracle that runs pre-upgrade checks, can remediate a number of pre-upgrade items, and then performs all the upgrade steps. See the MOS note on the AutoUpgrade Tool (Doc ID 2485457.1) for complete details.

- Database Upgrade Assistant (DBUA). This tool has been around for a number of years. It is also Java-based, but it is a GUI tool for performing the pre-upgrade checks and the upgrade process. DBUA can also be run with a response file in non-GUI / non-interactive mode.

- Manual Upgrade. This involves carefully following the documentation and notes by hand, and running all the pre-upgrade steps, upgrade steps, and scripts interactively from the command line.

Oracle recommends the first two methods because the tools check at each step of the upgrade process that things are ready and working. The AutoUpgrade tool can also resume an upgrade that stopped partway through, and it can perform multiple database upgrades in parallel.
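To make the AutoUpgrade option a little more concrete, here is a minimal Python sketch that writes out a configuration file for two databases and builds the command line for an analyze-only run. The SIDs, Oracle home paths, log directory, and the `sandbox.cfg` file name are all hypothetical, and only a handful of basic parameters are shown. Treat it as a starting point for a sandbox test, and check Doc ID 2485457.1 for the parameters and modes your version of the tool supports.

```python
def render_autoupgrade_config(log_dir, databases):
    """Return the text of an AutoUpgrade configuration file.

    `databases` maps a prefix (for example "upg1") to a dict with the
    keys sid, source_home, target_home and target_version.
    """
    lines = [f"global.autoupg_log_dir={log_dir}"]
    for prefix, db in databases.items():
        lines.append("")  # blank line between database entries
        for key in ("sid", "source_home", "target_home", "target_version"):
            lines.append(f"{prefix}.{key}={db[key]}")
    return "\n".join(lines) + "\n"


def autoupgrade_command(config_path, mode="analyze"):
    """Build the AutoUpgrade command line as a list of arguments.

    The default mode, "analyze", only runs the pre-upgrade checks.
    """
    if mode not in {"analyze", "fixups", "deploy", "upgrade"}:
        raise ValueError(f"unsupported mode: {mode}")
    return ["java", "-jar", "autoupgrade.jar",
            "-config", str(config_path), "-mode", mode]


# Hypothetical SIDs and Oracle home paths, for illustration only.
DATABASES = {
    "upg1": {
        "sid": "SALES",
        "source_home": "/u01/app/oracle/product/12.2.0/dbhome_1",
        "target_home": "/u01/app/oracle/product/19.0.0/dbhome_1",
        "target_version": "19",
    },
    "upg2": {
        "sid": "HR",
        "source_home": "/u01/app/oracle/product/12.2.0/dbhome_1",
        "target_home": "/u01/app/oracle/product/19.0.0/dbhome_1",
        "target_version": "19",
    },
}

if __name__ == "__main__":
    print(render_autoupgrade_config("/u01/app/oracle/autoupgrade", DATABASES))
    print(" ".join(autoupgrade_command("sandbox.cfg")))
```

Starting in analyze mode on a sandbox clone is a low-risk way to see what the tool would flag before you let it change anything.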

Note: Be sure to consult My Oracle Support (MOS) Note 1352987.1, FAQ : Database Upgrade And Migration, for additional clarification on the differences between and advantages of these three options. It’s a great central clearing-house for all information on just about any Oracle migration and upgrade issue.

How Long Will It Take?

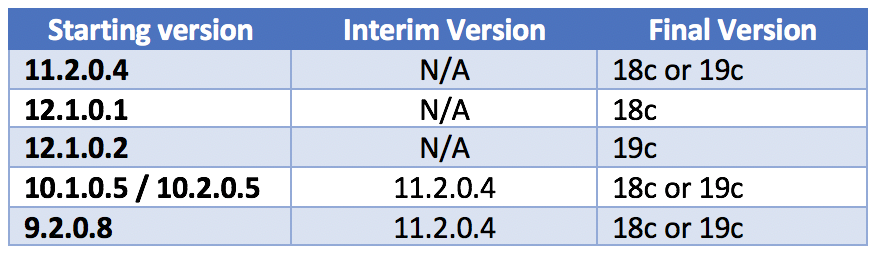

How much time will this take? Well, it could take a few hours or much longer. You should plan on staging all the Oracle software prior to your upgrade, and make sure it’s patched to the appropriate release level(s) you will need, especially if you are doing a multi-hop upgrade. Remember to include time zone data updates in your plans!

It’s also important to keep in mind that during the upgrade, the database will be updating core metadata, Java, and built-in PL/SQL. The overall size of your database, how much audit and performance history data has been retained, and the number of objects in the database will determine the overall time needed. It almost goes without saying that the speed of your hardware, including available CPU cycles and memory, will greatly affect the time to complete a successful upgrade.

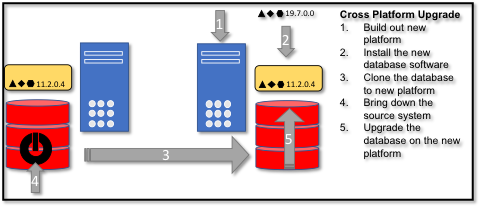

Cross-Platform Upgrade in Detail

Upgrading across platforms is really two smaller projects combined: The first phase involves getting a copy of the database from one platform to another, and the second phase is the actual in-place upgrade. Since we talked about in-place upgrade already, let’s focus on getting a copy of the database from one platform to another.

Oracle offers a number of tools for platform migration:

- Data Pump offers the ability to move the data in the database or migrate database tablespaces from one platform to another.

- Recovery Manager (RMAN) includes the capability to copy all the physical files of a database from one platform to another.

- Data Guard allows you to perform real-time replication of changes between two databases that have the same basic physical set of files / layout.

Here again, the size of the database being upgraded is a key determining factor, so it’s good to know that all three of these tools allow for incremental data transfer. You can do the initial large copy of data prior to your upgrade and then apply incremental changes right before your upgrade. Each tool has a different set of requirements and will of course be affected by the database version and platforms you are running.
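A quick back-of-the-envelope calculation shows why the incremental approach matters so much for downtime. The sketch below is plain arithmetic in Python. The 10 TB database size, the 50 GB of changes, and the 200 MB/s sustained throughput are made-up figures, so substitute numbers measured in your own environment.

```python
def transfer_hours(size_gb: float, throughput_mb_per_s: float) -> float:
    """Hours needed to move size_gb at a sustained throughput (1 GB = 1024 MB)."""
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return size_gb * 1024 / throughput_mb_per_s / 3600


# Hypothetical figures: a 10 TB database, 50 GB of changes since the
# initial copy, and 200 MB/s of sustained end-to-end throughput.
FULL_COPY_GB = 10 * 1024
INCREMENTAL_GB = 50
THROUGHPUT_MB_PER_S = 200

if __name__ == "__main__":
    full = transfer_hours(FULL_COPY_GB, THROUGHPUT_MB_PER_S)
    incr = transfer_hours(INCREMENTAL_GB, THROUGHPUT_MB_PER_S)
    print(f"Initial copy (before the outage): {full:.1f} hours")
    print(f"Final incremental (during the outage): {incr * 60:.1f} minutes")
```

With these assumed numbers, the initial copy takes roughly 14.6 hours while the final incremental takes a little over 4 minutes, which is the difference between an outage measured in hours and one measured in minutes. Real transfers also include conversion, apply, and validation time that this simple model ignores.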

The general process involves five steps:

- Build out your new platform, hardware, operating system, and storage. This includes setting up all your shop specific things.

- Install the Oracle software. At minimum you will need your target version. You may also need the source version, and interim versions as well to perform the full upgrade.

- Clone or copy the database using the proper Oracle tool to the new system. Perform pre-upgrade steps depending on the tool being used.

- Bring down the source system and copy the final data to synchronize source and target databases, hopefully using the incremental option to limit the amount of application downtime.

- Finally, perform the upgrade as an in-place upgrade.
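One way to reason about these five steps is to write them down as a simple runbook and mark which ones require the application to be offline. The Python sketch below does that. Every duration in it is a placeholder rather than a measurement, and the `Step` class and `RUNBOOK` list are names invented for this illustration. Replace the estimates with timings from your own rehearsals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    est_hours: float    # placeholder estimate, not a measured value
    needs_outage: bool  # True if the application must be offline


# The five steps of the general process, in order.
RUNBOOK = [
    Step("Build new platform (hardware, OS, storage)", 40.0, False),
    Step("Install Oracle software (target, plus source/interim if needed)", 4.0, False),
    Step("Clone or copy the database and run pre-upgrade steps", 16.0, False),
    Step("Stop the source and apply the final incremental sync", 1.0, True),
    Step("Upgrade in place on the new platform", 3.0, True),
]


def outage_hours(steps):
    """Sum the estimated hours of the steps that need an application outage."""
    return sum(s.est_hours for s in steps if s.needs_outage)


def total_hours(steps):
    """Sum the estimated hours of every step."""
    return sum(s.est_hours for s in steps)


if __name__ == "__main__":
    for number, step in enumerate(RUNBOOK, start=1):
        flag = "OUTAGE" if step.needs_outage else "online"
        print(f"{number}. [{flag}] {step.name}: ~{step.est_hours:g} h")
    print(f"Total effort: ~{total_hours(RUNBOOK):g} h")
    print(f"Application downtime: ~{outage_hours(RUNBOOK):g} h")
```

Only the last two steps count against your downtime budget, which is why the preparation work in the first three steps is worth doing thoroughly and well ahead of the cut-over.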

Note: If you are changing operating system brand or server processor architecture during your upgrade, be sure to carefully review MOS Note 733205.1, Migration Of An Oracle Database Across OS Platforms (Generic Platform), before proceeding. It’s chock-full of helpful advice that shouldn’t be ignored.

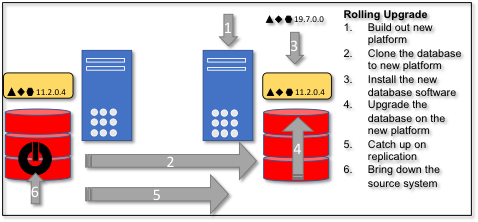

Real-Time or Rolling Upgrades

These tend to be the most complex upgrades and are driven by the need to minimize downtime. To keep downtime low, we need a technology that performs logical real-time or near real-time data replication from the source platform to the newly upgraded destination platform. Both of the tools discussed here require additionally licensed products from Oracle.

This leaves us with two main tools:

- Active Data Guard. Using a transient logical standby, you can reduce your downtime, though how much depends on many factors. There are numerous technical requirements between the source and target platforms, as well as operational complexity, to work through before you achieve a low-downtime scenario.

- GoldenGate. It offers full logical replication, which allows data changes to be copied from the source to the destination database even if the two differ dramatically in physical or infrastructure design.

Just like a cross-platform upgrade, this requires two separate systems that are each capable of running the full application load. Reducing downtime means running both the source and target platforms for an extended period of time. In a cross-platform upgrade, the source and target systems may only run in parallel for a week or two, which includes cut-over and testing of the upgrade.

To achieve real-time or rolling upgrades, the different environments usually tend to be running simultaneously for many weeks, and possibly even months. Of course, the larger the system being migrated, the longer the effort to upgrade, and the longer the upgrade process continues, the greater the tendency for the source and destination environments to become out of sync. Creating the initial new platform copy of a multi-terabyte database could take many hours, perhaps even days; once instantiated, it might take a few days for logical changes to be captured and propagated at the destination, especially if special datatypes like LOBs are involved.

Once the new copy is created, your next step is to upgrade it to its final version and then once again recapture any changed data at the destination. These processes alone often run for one to two weeks. Before upgrading, you will probably want to vet the new platform before it becomes your production environment, and after the initial data copy you will want to run additional health checks to make sure the system runs smoothly. This can quickly become a process of a month or more.

For Your Consideration: Multitenant

If you have chosen to take the leap to the Oracle multitenant architecture, you can choose to introduce the CDB / PDB architecture during the migration and upgrade process. There are two options for introducing the advantages of pluggable databases. One is for your upgraded database to become a single PDB in a CDB. The second is to plug your existing database in as a PDB in an existing CDB.

Realize that this adds some complexity to the process, which of course varies by the path and methodology of upgrade chosen. As I noted in the last article, it’s definitely not smart to learn multitenancy for the first time in the middle of what might be an already complex migration / upgrade process. In my experience, this is especially true when you’re trying to figure out a performance issue without understanding how to point diagnostic tools like ASH and AWR reports at the right PDB … or even worse, misinterpreting diagnostic results because you were monitoring the CDB instead of a PDB.

One Final Step Back …

Even though you may have planned out your migration and upgrade strategy at the database level, let’s take one more step back and consider the whole reason the database exists: the application(s) it supports.

Your application will generally drive the path and type of upgrade you choose. Some commercial off-the-shelf products like E-Business Suite or JD Edwards will only support specific types of upgrades; sometimes outages for upgrades are inevitable due to application-level patches or changes that are required. Other applications might be so simple or straightforward that you just copy the data from the old database to the new one. It’s critical to consider all of these complexities in the context of the migration / upgrade process, especially the amount of downtime your applications (and the user community they support) can tolerate before, during, and after the process.

As I’ve said in my previous blog posts: If not now, then when? If not you and your team, then who? Make some space on a sandbox or development server. Clone a backup of your database there, install 19c, and experiment with these upgrade tools and methods. At the very least, you will have some idea of what the process is like and what you can expect.

Carpe indicii! Seize the data.

